This post is special to me. It continues a story that dates back to 1998–99.

The post combines many things, but above all it is a symbolic message. The film is meant for individual interpretation; at the end, however, I will explain step by step what the author intended.

The film was created entirely using artificial intelligence. Every frame, voice, video effect, and sound you see or hear was generated; nothing was recorded, and no real photographs were used.

The only real element I used in this film is the narration. The text was written over 25 years ago in Polish and serves as the introduction to a longer story I wrote. This is how “A Tribute to the Freedom of Time and Love” begins. So far, only four people have read it, and it will remain hidden on a shelf. The only thing I wanted to share was the very beginning: a symbolic message open to personal interpretation.

Nowadays, it is easier and more convenient to visualize ideas. I could have composed a film from material collected over months, but this was also a kind of journey for me.

The film was created entirely using AI. The text was my foundation. At the very beginning, I used a custom agent in ChatGPT that organized every second of the clip. It generated a detailed timeline along with prompts for Midjourney, which became the basis for generating video materials.
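A timeline of this kind can be pictured as a list of time-coded segments, each pairing a line of narration with an image prompt. The sketch below is only an illustration of that idea: the `Segment` class, its field names, and the placeholder entries are hypothetical and do not reproduce the agent's actual output.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """One time-coded slice of the clip (hypothetical structure)."""
    start_s: float   # start time in seconds
    end_s: float     # end time in seconds
    narration: str   # narration line spoken during this slice
    prompt: str      # text prompt handed to the image generator

    @property
    def duration_s(self) -> float:
        return self.end_s - self.start_s


def check_timeline(segments):
    """Return True if every segment has positive length and none overlap."""
    if any(s.duration_s <= 0 for s in segments):
        return False
    return all(cur.start_s >= prev.end_s
               for prev, cur in zip(segments, segments[1:]))


# Placeholder entries, not the real narration or prompts.
timeline = [
    Segment(0.0, 5.0, "placeholder narration 1", "placeholder prompt 1"),
    Segment(5.0, 12.0, "placeholder narration 2", "placeholder prompt 2"),
]

total_s = sum(s.duration_s for s in timeline)
print(check_timeline(timeline), total_s)
```

Keeping the narration and the prompt in the same record makes it easy to see which generated shot belongs to which second of the voice track.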

After the text came the voice, or rather three voices. Initially, I planned to use HeyGen, but ultimately I relied only on ElevenLabs. There I created the narrator track as well as male and female voices. I also generated several sound effects there, such as a heartbeat and the echo of a passing train.

Once I had the text and voices, I generated the musical background. In Suno, I created two songs: the first calm and nostalgic, the second fast and epic. The soundtrack was modified depending on the film’s action, and during editing I did not strictly follow rules such as keeping audio below -3 dB; the sound was adapted to the narrative.
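For readers unfamiliar with the -3 dB guideline: a level in decibels relative to full scale converts to a linear amplitude with the standard relation amplitude = 10^(dB/20). The snippet below is a minimal sketch of that conversion; the function names are illustrative and do not come from any editing tool.

```python
import math


def db_to_amplitude(db: float) -> float:
    """Convert a level in dB (relative to full scale) to linear amplitude."""
    return 10 ** (db / 20)


def amplitude_to_db(amplitude: float) -> float:
    """Convert a linear amplitude (0 < amplitude <= 1) back to dB."""
    return 20 * math.log10(amplitude)


# A -3 dB ceiling leaves peaks at roughly 71% of full scale.
ceiling = db_to_amplitude(-3.0)
print(f"{ceiling:.3f}")
```

In other words, the guideline keeps roughly 29% of the available amplitude as headroom, which is the margin given up when the sound follows the narrative instead.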

After the music came the video. I used tools such as Kling, RunwayML, Google Veo, ChatGPT, ElevenLabs, Midjourney, PixVerse, and even Generative Refill in Adobe Premiere.

While the beginning was intentionally broad and less visually consistent, the second part relied on continuity between shots, with each frame continuing from the previous one. The opening and closing shots helped achieve overall visual cohesion.

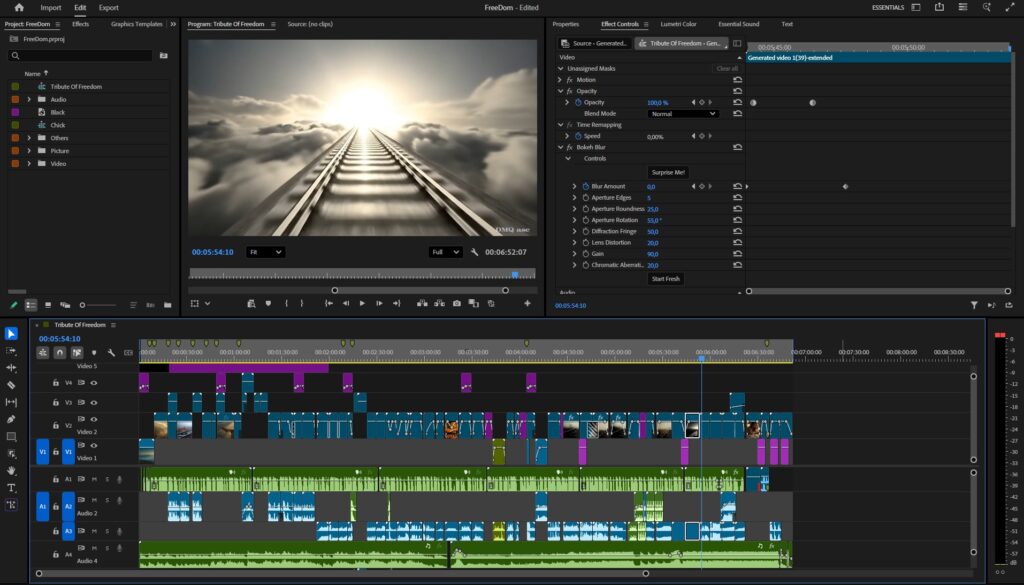

The video material was assembled in programs such as Adobe Premiere, where the film was edited; After Effects, where I added several effects; and Photoshop, where I enhanced quality and maintained proportions.

This film is unusual because it was created entirely with AI. From experience, I know that the best transition between reality and artificial creation is a real element extended artificially: for example, two real frames (beginning and end) with a generated film in between.

The footage was generated in 720p and 1080p at 24 fps, and the final film was finished in Full HD.
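As a rough illustration of what mixing these formats means in practice, the sketch below computes the upscale factor from 720p to 1080p and the number of frames in a clip at 24 fps. It assumes the standard 16:9 dimensions (1280×720 and 1920×1080); the helper names are hypothetical.

```python
from fractions import Fraction

# Standard 16:9 frame sizes (assumed; the exact export sizes are not stated).
RESOLUTIONS = {"720p": (1280, 720), "1080p": (1920, 1080)}


def scale_factor(src: str, dst: str) -> Fraction:
    """Linear scale needed to bring `src` footage up to `dst` size."""
    (src_w, src_h), (dst_w, dst_h) = RESOLUTIONS[src], RESOLUTIONS[dst]
    fx, fy = Fraction(dst_w, src_w), Fraction(dst_h, src_h)
    if fx != fy:
        raise ValueError("aspect ratios differ; scaling would distort the image")
    return fx


def frame_count(seconds: float, fps: int = 24) -> int:
    """Number of frames in a clip of the given length."""
    return round(seconds * fps)


factor = scale_factor("720p", "1080p")
print(factor, factor ** 2, frame_count(15))
```

A 720p shot has to be enlarged 1.5 times per side to sit in a Full HD timeline, so it carries less than half the pixels of a native 1080p shot.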

Today, creating a 15-second clip is no longer a challenge. A great deal of content is being produced, and the tools are becoming increasingly powerful. Here, I wanted to achieve exactly what I envisioned. The film was meant to be symbolic, and it became exactly that.

Many shots are misty, darkened, backlit, or layered with overlapping visuals and audio tracks.

In general, I would like each viewer to extract their own symbolism from this clip and to find their own meaning rather than a single defined message: something beyond an eagle that may not actually be an eagle, and a nest that represents two different places.

And what did the author intend?

The whole piece is divided into two parts. The first is calm and nostalgic. The tracks and the walking man symbolize our life path. We walk forward, confident in our beliefs.

The eagle is another symbol, mainly a bird against a vast landscape. It does not have to be an eagle and is not exactly one; it could be a stork or a dove. The intention was composition and a sense of order.

The mouse: an insignificant page of history, yet still an important being. The chick: the goal of sustaining the species.

Then this order is disrupted.

A man walking through life loses control and disturbs the balance. One decision becomes the foundation for changing history and affects the surroundings.

The chick feeding on its parent in order to survive.

Escape becomes limitation. Breath, heartbeat, fog. We cannot leave the tracks and become trapped in our own decisions, asking “why” at the end even though it was our own choice.

This film does not have to be tragic; it is a dream. It is a reflection encouraging us to slow down and think about more important things in life than simply moving forward… along the tracks.

If you enjoyed the film and feel like sharing it, thank you very much. I am curious about how others perceive it; perhaps you interpret it differently. The film will also be published on my website and on LinkedIn.

I also plan to create an avatar in HeyGen and another clip, this time not through API-based systems but locally on a PC with ComfyUI, since increasingly better models are appearing there that generate not only video but also audio and 3D models.

How long did it take and how much did it cost?

The film took seven days, about two hours per day.

The process involved generating voices, video, and effects and assembling them as I went, at a pace of about one minute of finished film per day. I used several AI platforms and supported myself with a VPN, although most of the material was created in Veo. I could have finished it in a single day, but spreading the work over a week allowed me to introduce corrections and changes continuously. Sometimes, while finishing the film, I modified the beginning to maintain consistency of the overall message.

The final film contains 55 short video shots, 5 graphic frames, 2 music tracks, 6 voice layers, and 7 sound effects. Optical flow was applied to improve motion smoothness.
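Putting the figures above together gives a rough sense of scale. The sketch below is back-of-the-envelope arithmetic only: the running time is an estimate derived from the rounded "about one minute per day" figure, and the average shot length assumes the 55 video shots fill the whole running time, which ignores the graphic frames.

```python
# Figures taken from the text; all of them are rounded.
DAYS = 7
HOURS_PER_DAY = 2
FINISHED_MINUTES_PER_DAY = 1  # "about one minute" per day

assets = {
    "video shots": 55,
    "graphic frames": 5,
    "music tracks": 2,
    "voice layers": 6,
    "sound effects": 7,
}

total_hours = DAYS * HOURS_PER_DAY
approx_runtime_s = DAYS * FINISHED_MINUTES_PER_DAY * 60   # estimate only
avg_shot_s = approx_runtime_s / assets["video shots"]      # assumes shots fill the runtime
total_assets = sum(assets.values())

print(total_hours, approx_runtime_s, round(avg_shot_s, 1), total_assets)
```

Under these assumptions, about 14 hours of work produced roughly seven minutes of film built from 75 separate elements, with an average shot lasting under eight seconds.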

The clip cost me 0 PLN to create, although I already had access to subscriptions such as ChatGPT, Midjourney, ElevenLabs, Kling, and the full Adobe suite. Multiple accounts allowed me to accelerate the process, which is why I am increasingly moving toward the ComfyUI environment to become independent from API-based systems. For people unfamiliar with new technology, the process can be either frustrating or expensive. Not every frame turns out as intended, which doubles or triples the number of attempts. Additionally, a lack of knowledge about AI is sometimes exploited by so-called “AI training specialists,” while at the same time the boundary between authentic and AI-generated content is becoming very thin. Deepfakes and scams are already a reality: a single photo and 15 seconds of voice are enough to create a fully convincing video conversation.

Therefore, AI is not only a support tool for graphics that improves quality and convenience in life; it is also a tool that requires awareness and up-to-date knowledge to use safely.

Returning to the film from the post, there is a screenshot below of the final version exported from Adobe Premiere.

The selected graphics demonstrate my skills in transforming and creating visual effects for people, objects, or landscapes based on original material. The final result is subjective and reflects a specific timeframe. Each of us perceives things differently and has different amounts of time and skills available. These regularly published posts, each built around a themed project, are meant to build up a systematic collection of materials that showcase what is possible in graphics.

Enjoy watching!